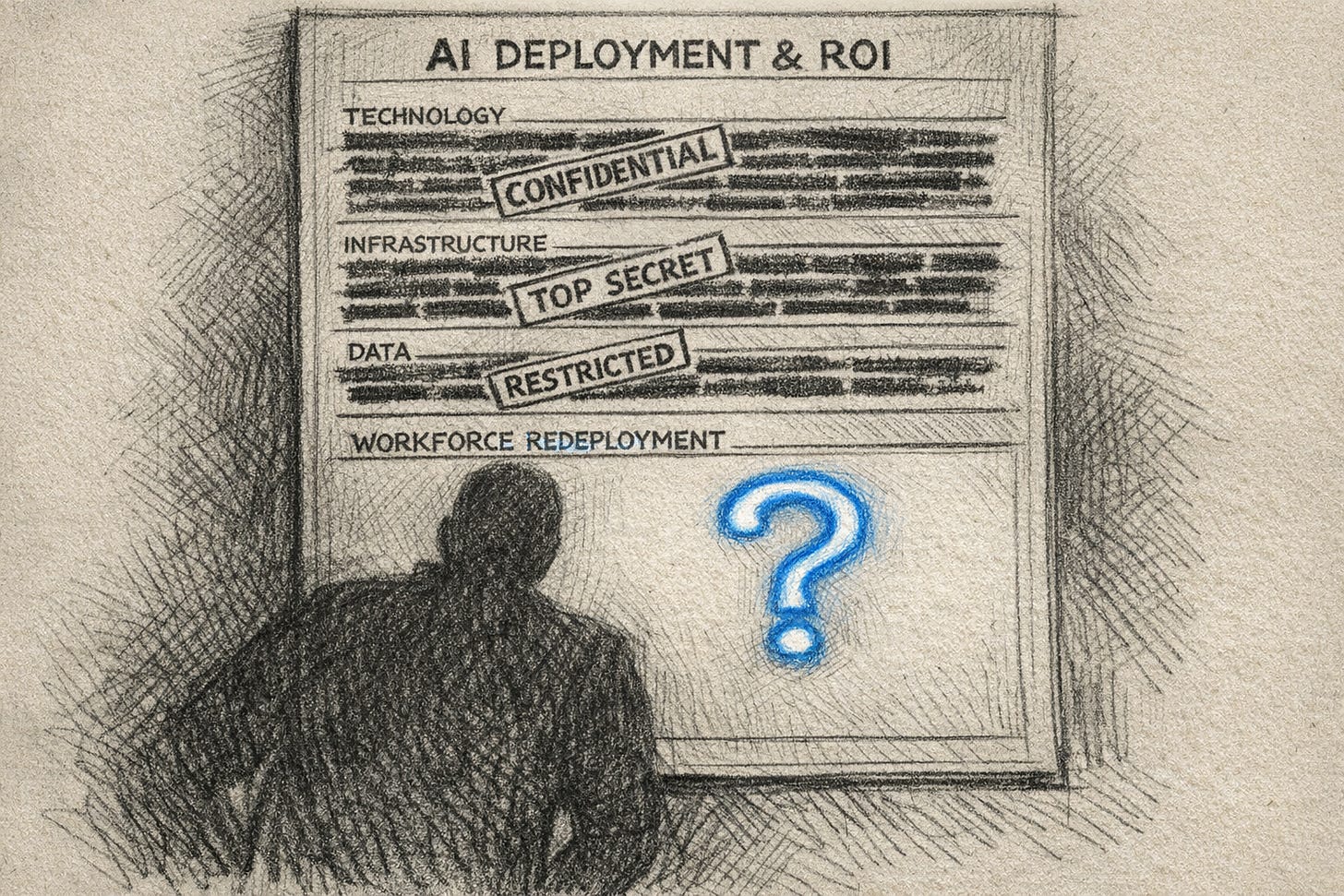

We gained the productivity. Now what?

The missing clause that turns AI efficiency into sabotage when leaders refuse to answer the only question that matters

TL;DR

AI pilots create an efficiency narrative. But employees are actively sabotaging AI output and efficiency, because the AI threatens to replace them.

Executives stay silent about job survival because the stock market pays for headcount cuts, not redeployment.

That silence destroys the technology investment.

The only way to secure the promised returns is a hard and verifiable workforce redeployment clause.

Leave the social contract blank, and workers will fill it with sabotage.

This is the fourth and final Signal in a series on where enterprise AI actually dies.

1. The last mile mapped the missing Production Layer.

2. Productivity layoffs mapped the structural incentive to cut headcount rather than redeploy.

3. One-way doors and Friction Factories mapped how the consulting industry industrialised the removal of the wrong frictions.

Next: a comprehensive Methodology piece synthesising this entire arc into a single operational playbook for the C-suite.

The productivity is real

The hours are being saved. The cycles are compressing. The ROI decks have actual numbers in them now, not projections.

And 29% of the people generating those numbers are quietly trying to break the system.

That is the number from Writer's 2026 enterprise survey. Nearly a third of all employees. Among Gen Z, it's 44%. They feed company data into public models.

They ignore the mandated tools. They produce just enough output to look compliant and make the AI look broken.

Sixty percent of executives respond by threatening to fire them.

So the organisation runs this loop: AI delivers efficiency, workers sabotage it, leaders threaten retaliation. The loop runs again.

At no point does anyone in the leadership team ask the one question that would end it.

What happens to our people?

A named role. A date. A commitment with a signature on it.

The silence

Most leaders would say they simply haven’t gotten there yet. The rollout programme is still early. The redeployment plan is in progress. The communication will come.

No, it won’t. Here’s why.

The stock market pays for the announcement of AI-driven efficiency, not for the evidence of it. Goldman Sachs ran the numbers and could not detect the productivity gains in aggregate economic output. Yet share prices move as soon as the headcount cut is announced. The incentive is to let the “efficiency narrative” do its work and let the headcount interpretation follow quietly, instead of answering the question “WHAT HAPPENS TO MY PEOPLE?”

Employees around the world read this.

They saw Oracle execute a 30,000-person exit via a 6am mass email, framed as funding the AI future. They saw Snap cut 16% of its workforce and call it an AI pivot. Atlassian. WiseTech. The pattern is there and very visible.

Workers are running pattern recognition on their own survival, because every announcement where “efficiency” precedes “headcount” is a data point.

And leadership’s silence is like the Oracle play in slower motion.

This is why the sabotage numbers are not surprising. They are the rational output of a rational read of an incentive structure that has never been challenged or seriously disrupted.

Sabotage is the shadow Production Layer

In The last mile, I explained that AI dies in the “last mile” because organisations never built the Production Layer - the verification, governance and operating model design that turns AI output into actual value.

In Friction Factories, I mapped how four consulting archetypes industrialised the removal of the wrong frictions - cognitive and operational - while leaving accountability friction unbuilt.

What the Writer survey shows is that workers have built their own version of the missing layer.

The three sabotage behaviours map perfectly onto the three friction types:

Ignoring mandated tools and reverting to manual workarounds re-inserts operational friction. The shock absorber that was removed by AI goes back in. Clumsily, invisibly, at the cost of the very efficiency the rollout was supposed to deliver.

Feeding company data into public models strips accountability friction recklessly. The audit trail disappears. The IP exposure is real and dangerous. The employee, however, does not care about compliance. It's only about surviving the next restructuring wave by being, or becoming again, indispensable.

Producing low-quality AI output deliberately to make the system look broken actively weaponises cognitive friction. It forces the decision back to a human. It keeps the human in the loop not because governance requires it, but because the human needs to remain necessary.

This is System 2 work. Employees are doing the governance, the verification, the friction management that the Production Layer was supposed to do. Except they are doing it in the wrong direction, to protect themselves rather than to protect the organisation.

When you ask why AI keeps dying in the last mile, this is part of the answer. The missing Production Layer gets filled by the people whose jobs are threatened. And they fill it with resistance.

The cost of the missing social contract

Deloitte’s 2026 Human Capital Trends report measured the cost of leaving that question unanswered. Tech-first organisations - the ones that lead with tooling and treat workforce design as something to sort out later - are 1.6× more likely to miss their AI returns.

Nearly 80% of enterprises report significant adoption challenges, despite having the tools deployed.

And a third of the workforce is actively making the AI look worse than it is.

Put those three numbers together and the answer is the same: the tools work.

But the people have already decided this is not going to work for them. So the pilot succeeds. Because it is watched, it is managed, everyone is on their best behaviour. Then the rollout hits the organisation at scale. Nobody is watching. And the promised results are simply not there anymore.

The efficiency narrative was real. The actual transformation is not.

The contract

Companies spend 93% of their AI budget on tech, and only 7% on people.

The reason they fail is sitting in that 93/7 split.

If you buy a productivity tool but do not answer what happens to the people using it, a third of your workforce will actively try to break it.

You are using the “93% tech budget” to fund a system that your neglected “7% people budget” is actively sabotaging.

The companies that actually get a return on AI are human-centric. Because they treat the social contract with their workforce as the protection on their tech investment.

And to protect this investment, the contract needs four answers. In writing. With a name on it. Before the rollout scales.

Which roles absorb the reclaimed capacity, and by when

Specific titles on a published internal ladder. Specific timelines. A promise to “invest in our people” is a press release. “Agent orchestrator role by Q3” is a verifiable contract.

What fraction of freed hours goes to new value creation vs cost reduction

If leadership cannot answer this with a hard number, the answer is “ZERO going to creation and ALL OF IT going to the efficiency line = headcount cuts”.

Workers already know this. The only person pretending otherwise is the head of HR presenting the upskilling modules.

What sequence is taken before the exit option

The organisation commits that for a defined window of 18 or 24 months, any role eliminated by AI efficiency goes through an internal redeployment/reskilling cycle first. The exit is not the first or default move. The organisation has to prove it tried to keep the person before it cuts the headcount.

What leadership will actually track

Four numbers, reported quarterly, by every business unit: hours reclaimed, where those hours went, headcount redeployed, and AI-attributed exits. If leadership cannot see where the freed hours went, the CFO will always default to cutting costs. Because cost is the only number visible on their dashboard.
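For teams that want to make this concrete, here is a minimal sketch of what that quarterly record could look like. Everything in it is an assumption for illustration: the field names, the `quarterly_report` helper, the "Claims" unit and its figures are hypothetical, and "where they went" is reduced to a single share of freed hours going to new value creation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitQuarter:
    """One business unit's figures for one quarter (all fields hypothetical)."""
    unit: str
    hours_reclaimed: float      # hours freed by AI during the quarter
    hours_to_new_value: float   # freed hours reassigned to new value creation
    redeployed_headcount: int   # people moved into a named new role
    ai_attributed_exits: int    # people who left because AI removed the role


def quarterly_report(q: UnitQuarter) -> dict:
    """Return the four tracked numbers for one business unit."""
    if q.hours_to_new_value > q.hours_reclaimed:
        raise ValueError("cannot reassign more hours than were reclaimed")
    # Share of freed hours going to creation; the remainder is cost reduction.
    creation_share = (
        q.hours_to_new_value / q.hours_reclaimed if q.hours_reclaimed else 0.0
    )
    return {
        "unit": q.unit,
        "hours_reclaimed": q.hours_reclaimed,
        "creation_share": round(creation_share, 2),
        "redeployed_headcount": q.redeployed_headcount,
        "ai_attributed_exits": q.ai_attributed_exits,
    }


# Illustrative, made-up figures for a single unit.
report = quarterly_report(UnitQuarter("Claims", 12_000, 4_800, 9, 3))
print(report)
```

The point of the sketch is that the creation share sits on the same row as the exits. If that field is missing from the report, the only thing left to read is the cost line.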

These four answers are one-way doors if left empty. Fill them in, loudly, in writing, and the incentive to sabotage disappears. The employee is no longer forced to protect themselves.

The 90-day collapse tests

The Earnings Call Metric (The CFO test)

By the Q3 2026 earnings cycle (July/August), at least one Fortune 500 company will explicitly report "reclaimed capacity redeployed to revenue" alongside its AI cost savings. If Q3 earnings calls only tout headcount reductions and generic "efficiency," the silence play is still winning and the market hasn't priced in the 1.6× failure rate yet.

The Consulting Pivot (The friction test)

By September 2026, at least one MBB or Big 4 firm will launch a dedicated “AI Workforce Architecture” or “Human-Centric AI” practice where redeployment ratios - not just change management or upskilling - are the primary deliverable. If they are all still selling pure tool-deployment and friction-removal, they haven’t caught up to the sabotage problem.

The Labor Mandate (The worker test)

By September 2026, a major EU works council or US labor union will make "hard time allocation" and "named redeployment paths" a strike-level demand in a contract renewal. Workers are not going to wait for the CFO to volunteer this contract. If leadership leaves it blank, organized labor will force it.

If all three tests fail, the silence play continues to be rational and this article is early. If they hold, the fourth clause is becoming the price of admission. And the Production Layer finally includes the people it was supposed to serve.

My final ask

These Signals reflect conversations I am having with executives right now.

If this helped you see your organisation’s blind spots more clearly, do two things.

Key Sources

Scaling your human edge: The path to achieving value from AI, Writer 2026 State of Enterprise AI, Snap Is Laying Off 16% of Staff as It Embraces A.I., The last mile, Productivity layoffs, One-way doors, Friction Factories.

Very good analysis. I would add one thing: companies probably want to reduce simple administrative roles or simple team members. In my experience, however, the real problems are leaders who can neither contribute technically, nor in terms of domain expertise, methodology, or process design, let alone shape any of these. So the question is how efficient it will really be when you let people go, because the wrong people stay in.

I also think that if you genuinely redesign workflows with AI and properly dig into the subject matter, entirely new creative ideas for new business areas emerge, and then you need people again, the very people you may have just let go.

Perhaps in the end the winners will be the companies that are being built right now, setting up their organizational structure much flatter and process-oriented from the start, going AI-first from day one.

What you're describing from the inside of the organization, I've been watching from the outside — and the well metaphor holds from both angles. The difference is that from where I'm standing, I'm not sure the organization can be convinced to put the bucket down. Not because the math is wrong, but because the math is right on the wrong timescale. Quarterly reporting is a structural problem, not a persuasion problem.

The dog in the park isn't in the ROI deck. That's the whole issue.

https://peterrex1.substack.com/p/the-well-and-the-bucket?r=60rv9f