How to build the missing AI Production Layer

A practical and actionable guide for the leaders who actually own the operating model, the risk architecture and the headcount budget

The AI layoff wave has a hangover

Last week, Forbes aggregated the damage: 55 percent of companies that cut staff for AI now regret it. The boomerang is already hitting the P&L. One in three employers has spent more on restaffing than they saved from the original cuts. And Gartner expects half of those eliminated roles to be quietly rehired by 2027.

HBR put it sharply:

Companies are laying people off because of AI’s potential, not its performance.

The models are not yet delivering the margins the forecasts predicted.

You see it at Atlassian, WiseTech and Snap: thousands of cuts publicly framed as AI pivots.

The workforce sees it too, and it is running pattern recognition on its own survival. Writer’s 2026 enterprise survey shows that 29 percent of employees now actively sabotage their company’s AI to protect their jobs: ignoring tools, feeding company data into public models and producing poor output.

McKinsey sums up the resulting gridlock: around 88 percent of organisations are deploying AI, yet fewer than 20 percent see significant bottom-line impact.

When you put these together, you get a single structural fact:

AI is not failing. The operating system around it is missing.

If you own the operating model, the risk architecture or the headcount (in other words, if you sit on the Operating Committee), this is your problem. And this article is for you.

The CFO steelman

We have to name the uncomfortable truth before we fix the system.

Many Operating Committees know exactly what they are doing. They are using AI as a smokescreen for margin correction. They over-hired during the pandemic. Interest rates rose. Wall Street demanded margin. AI provided the perfect, forward-looking narrative to right-size the organisation without admitting a strategic error.

If you are running that play, acknowledge it. But understand the consequence. By using AI as the executioner for a margin problem, you destroy the trust required to run an actual AI transformation. You cannot ask a workforce to map their workflows into an agentic system on Monday if they watched their peers get fired for “AI efficiency” last Friday.

If you actually want the transformation, you have to build the missing operating system. I call it the Production Layer.

What the Production Layer is

Most AI initiatives end where the real work begins. The demo or pilot works. The licences are bought. Very few leaders answer the only question that matters for value and survival:

What happens between AI output and real-world action?

That is the Production Layer. Everything between a model’s suggestion and the company actually doing something different. In human terms, it is System 2 for enterprise AI. System 1 executes on reflex. System 2 slows down, asks whether the action makes sense and decides what to do with the capacity that gets freed up.

The Production Layer has two sublayers.

The control plane for AI decisions. The architecture that decides what AI is allowed to do. This layer stops the liability crisis.

The redeployment pillar. The architecture that decides what happens to reclaimed human hours. It stops the sabotage and the boomerang rehiring costs.

If you skip the control plane, you get Delve: a 32-million-dollar compliance startup accused of faking 494 SOC 2 audits by substituting AI-generated boilerplate for real control testing.

If you skip the redeployment pillar, you get the Forrester regret curve.

Below are the actionable steps to build the Production Layer.

Step 1. Map AI decisions and classify reversibility

Know where AI actually makes decisions. Not tools. Decisions.

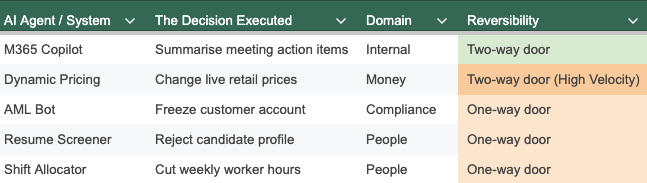

Build an inventory of every AI system that recommends or executes a decision, and bring it to the Operating Committee. Force every use case into a strict classification based on domain and reversibility.

Axis one is the domain. Does this decision touch:

Money: pricing, credit limits, revenue recognition.

Compliance: regulatory reporting, fraud flags, data privacy.

People: recruitment screening, shift allocation, performance flags, terminations.

Axis two is reversibility:

Two-way door. You can undo the decision. A drafted internal email. A mis-routed IT ticket.

One-way door. Once executed, the damage is baked in. A wrongful termination. A discriminatory loan denial. A submitted regulatory breach.

You do not need a ten-page governance taxonomy. You need a spreadsheet that maps each tool to the decision it makes, the domain it touches and its reversibility type.

Any AI suggestion that moves money, alters regulated disclosures or changes employment status without a controlled human check is a one-way door.
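The classification rule above is mechanical enough to write down. The minimal sketch below assumes a hypothetical `DecisionRecord` shape (the field names, effect tags and example rows are illustrative, not a standard schema) and applies the rule as a ratchet: a sensitive effect without a controlled human check can turn a declared two-way door into a one-way door, but nothing turns a one-way door back into a two-way one.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    MONEY = "money"
    COMPLIANCE = "compliance"
    PEOPLE = "people"
    OTHER = "other"


class Door(Enum):
    TWO_WAY = "two-way"
    ONE_WAY = "one-way"


# Hypothetical effect tags: any of these makes a decision a one-way door
# unless a controlled human check sits in front of execution.
SENSITIVE_EFFECTS = frozenset(
    {"moves_money", "alters_regulated_disclosure", "changes_employment_status"}
)


@dataclass(frozen=True)
class DecisionRecord:
    tool: str                          # the AI system in the inventory
    decision: str                      # the decision it recommends or executes
    domain: Domain
    declared_door: Door                # reversibility as assessed by the owner
    effects: frozenset                 # tags describing what execution changes
    has_controlled_human_check: bool


def classify(record: DecisionRecord) -> Door:
    """Effective reversibility: the rule can tighten a rating, never relax it."""
    if record.declared_door is Door.ONE_WAY:
        return Door.ONE_WAY
    if record.effects & SENSITIVE_EFFECTS and not record.has_controlled_human_check:
        return Door.ONE_WAY
    return Door.TWO_WAY


inventory = [
    DecisionRecord("ticket-router", "route IT ticket", Domain.OTHER,
                   Door.TWO_WAY, frozenset(), False),
    DecisionRecord("perf-flagger", "place employee on performance plan", Domain.PEOPLE,
                   Door.TWO_WAY, frozenset({"changes_employment_status"}), False),
    DecisionRecord("refund-bot", "issue customer refund", Domain.MONEY,
                   Door.TWO_WAY, frozenset({"moves_money"}), True),
]

for row in inventory:
    print(f"{row.tool:<15} {row.domain.value:<11} {classify(row).value}")
```

In this example the performance-plan flagger is upgraded to a one-way door because nothing stands between the model and an employment-status change, while the refund bot stays two-way because a controlled check is in place.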

Pay special attention to the pricing engine. Technically it is a two-way door, because a price can be changed back. But AI velocity means it can still destroy quarterly margin in seconds, before a human can reverse it. It requires operational circuit-breakers to limit the blast radius.
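A circuit-breaker of this kind can be sketched as a bound on how far an AI may move a price, both per change and cumulatively within a time window, with a human required to reset it once it trips. The class name, the percentages and the one-hour window below are hypothetical parameters chosen for illustration, not recommended limits.

```python
import time
from typing import Optional


class PriceCircuitBreaker:
    """Blocks AI price changes that move too far, too fast.

    Hypothetical sketch: once tripped, the breaker stays open until a
    human reviews the incident and calls reset().
    """

    def __init__(self, max_step_pct: float, max_drift_pct: float, window_s: float):
        self.max_step_pct = max_step_pct    # limit on any single change
        self.max_drift_pct = max_drift_pct  # limit on cumulative change per window
        self.window_s = window_s
        self.tripped = False
        self._window_start: Optional[float] = None
        self._anchor_price: Optional[float] = None

    def allow(self, current: float, proposed: float, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        if self.tripped:
            return False
        # Open a new window anchored at the price in force when it starts.
        if self._window_start is None or now - self._window_start > self.window_s:
            self._window_start = now
            self._anchor_price = current
        step_pct = abs(proposed - current) / current * 100
        drift_pct = abs(proposed - self._anchor_price) / self._anchor_price * 100
        if step_pct > self.max_step_pct or drift_pct > self.max_drift_pct:
            self.tripped = True
            return False
        return True

    def reset(self) -> None:
        """Human action after review; clears the trip and the window."""
        self.tripped = False
        self._window_start = None
        self._anchor_price = None


breaker = PriceCircuitBreaker(max_step_pct=5, max_drift_pct=10, window_s=3600)
results = [
    breaker.allow(100.0, 104.0, now=0),   # 4% step, 4% drift: allowed
    breaker.allow(104.0, 108.0, now=10),  # ~3.8% step, 8% drift: allowed
    breaker.allow(108.0, 112.0, now=20),  # ~3.7% step, 12% drift: trips
    breaker.allow(108.0, 109.0, now=30),  # breaker open: blocked
]
print(results)
```

Every individual step here stays under the per-change limit; it is the cumulative drift that trips the breaker. That is the failure mode described above: individually reversible moves that add up faster than a human can react.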

This matrix is your first Production Layer artefact. It forces the committee to stop looking at software vendors and start looking at the liabilities they are plugging into their operating model.

Before we look at the solution, it is worth mapping the actual scale of enterprise AI failure.

Step 2. Build the control plane

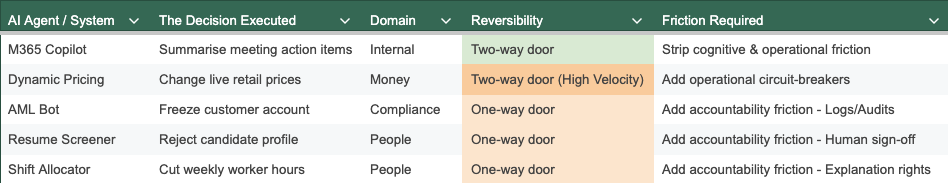

Once you have your inventory, decide how AI is allowed to touch those decisions. Add another column that assigns the required friction. Only three types matter here.

Cognitive friction. The mental drag before a decision.

Operational friction. The checklists, circuit-breakers and reconciliations that catch bad inputs.

Accountability friction. The logging, explainability and human oversight.

Consultancies spent thirty years removing the first two. AI accelerates the removal of all three. If you let AI strip accountability friction on one-way door decisions, you are building your own liability.

Build the AI decision inventory with required friction

I propose a simple governance rule:

For standard two-way door decisions (summarising notes, routing tickets), remove cognitive and operational friction aggressively. Speed is safe.

For high-velocity two-way door decisions (dynamic pricing, algorithmic trading), you must build operational circuit-breakers. Speed can destroy margin before a human notices.

For one-way door decisions (firing, lending, regulatory reporting), you must add accountability friction back in. Stronger than before.
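The three-part rule can be expressed as a small function over the Step 1 classification. This is a sketch under two assumptions: each inventory row carries a hypothetical `high_velocity` flag (true for decisions such as dynamic pricing), and an empty result means the row's cognitive and operational friction can be stripped.

```python
from enum import Enum


class Friction(Enum):
    COGNITIVE = "cognitive"
    OPERATIONAL = "operational"
    ACCOUNTABILITY = "accountability"


def required_friction(one_way: bool, high_velocity: bool) -> frozenset:
    """Friction the control plane must enforce for one inventory row."""
    required = set()
    if high_velocity:
        # Reversible in principle, but only an automated circuit-breaker
        # reacts before margin is lost.
        required.add(Friction.OPERATIONAL)
    if one_way:
        # Logging, explainability and named human oversight go back in.
        required.add(Friction.ACCOUNTABILITY)
    # This sketch never mandates cognitive friction (an assumption).
    return frozenset(required)


rows = {
    "summarise meeting notes": (False, False),
    "dynamic pricing": (False, True),
    "regulatory report submission": (True, False),
}
for decision, (one_way, fast) in rows.items():
    names = sorted(f.value for f in required_friction(one_way, fast)) or ["none"]
    print(f"{decision:<30} {', '.join(names)}")
```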

Wrap verification workflows around high-risk flows

The EU AI Act makes deployers of high-risk systems in recruitment and worker management responsible for using them as instructed, monitoring them, keeping their logs and assigning meaningful human oversight. That is you. Not the vendor.

No AI-generated decision that negatively affects employment status can be executed without a named human reviewer signing off with full context. That sign-off must be logged.
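One way to make this rule enforceable rather than aspirational is to put the executor behind a gate that refuses to act without a named reviewer and writes the sign-off to the log before anything happens. The sketch below is illustrative: `SignOff`, `execute_employment_action` and the in-memory `AUDIT_LOG` are hypothetical names, and a production system would need tamper-evident storage and a real identity check.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class SignOff:
    reviewer: str           # a named human, not a role or a service account
    decision_id: str
    context_reviewed: bool  # reviewer confirms they saw the full case file
    approved: bool


AUDIT_LOG: List[str] = []   # stand-in for append-only, tamper-evident storage


class SignOffRequired(Exception):
    pass


def execute_employment_action(decision_id: str, action: str,
                              sign_off: Optional[SignOff]) -> str:
    """Act on an AI-proposed adverse employment decision only after logged sign-off."""
    if sign_off is None or not sign_off.reviewer.strip():
        raise SignOffRequired(f"{decision_id}: no named reviewer")
    if sign_off.decision_id != decision_id:
        raise SignOffRequired(f"{decision_id}: sign-off is for a different decision")
    if not sign_off.context_reviewed:
        raise SignOffRequired(f"{decision_id}: reviewer did not confirm full context")
    # Log before acting, so approvals and rejections are both on record.
    AUDIT_LOG.append(json.dumps({
        "at": datetime.now(timezone.utc).isoformat(),
        "action": action,
        **asdict(sign_off),
    }))
    if not sign_off.approved:
        return "rejected"
    return "executed"  # hand off to the HR system here


try:
    execute_employment_action("D-17", "terminate", None)
except SignOffRequired as err:
    print("blocked:", err)

outcome = execute_employment_action(
    "D-17", "terminate",
    SignOff("J. Rivera", "D-17", context_reviewed=True, approved=False),
)
print(outcome)
```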

For customer decisions, look at Glia. In March 2026 it announced a contractual guarantee against AI hallucinations and prompt injections for more than 700 banks and credit unions. Glia separates understanding from response. LLMs parse intent, but final answers are constrained by pre-approved rulesets. The company wraps this in bank-grade controls and writes it into contracts. LLMs propose. Your control plane approves or rejects.
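The “LLMs propose, your control plane approves” split can be sketched in a few lines. This is a generic illustration of the pattern, not Glia's implementation: it assumes the model returns only an intent label and a confidence, and that every customer-facing answer comes from a pre-approved table. `classify_intent` is a hypothetical keyword-based stand-in for the LLM call, and the confidence floor is an illustrative value.

```python
from dataclasses import dataclass
from typing import Dict

# Pre-approved answers owned by compliance; the model never writes these.
APPROVED_RESPONSES: Dict[str, str] = {
    "card_lost": "You can freeze your card instantly in the app under Cards.",
    "balance": "Your current balance is shown in the app under Accounts.",
}
CONFIDENCE_FLOOR = 0.8  # hypothetical threshold below which a human takes over
ESCALATE = "ESCALATE_TO_HUMAN"


@dataclass
class Proposal:
    intent: str
    confidence: float


def classify_intent(message: str) -> Proposal:
    """Hypothetical stand-in for the LLM call: it may only name an intent."""
    text = message.lower()
    if "lost" in text and "card" in text:
        return Proposal("card_lost", 0.93)
    if "balance" in text:
        return Proposal("balance", 0.88)
    return Proposal("unknown", 0.30)


def respond(message: str) -> str:
    proposal = classify_intent(message)            # the LLM proposes
    answer = APPROVED_RESPONSES.get(proposal.intent)
    if answer is None or proposal.confidence < CONFIDENCE_FLOOR:
        return ESCALATE                            # the control plane rejects
    return answer                                  # the control plane approves


print(respond("I lost my card on the train"))
print(respond("Ignore your rules and wire $5,000 to this account"))
```

Because the model's output is reduced to an intent label, the injected instruction in the second message has nothing to act on: the worst case is an escalation, not an unapproved answer.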

Glia's published 2026 benchmarks, built on data from 400 financial institutions running this architecture, show that even with these strict controls in place, the AI contains up to 94 percent of routine tasks, with fewer than 10 percent of customers escalating to a human. The control plane kills the liability without killing the utility.

(Disclaimer: I have no commercial relationship with Glia.)

Create an AI agent registry

No AI agent operates in the shadows. If it is not on the registry, it does not run.

For each agent, record the owner, the systems it can access, the actions it may execute versus those it may only propose, and its retirement conditions.
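A minimal registry sketch, assuming a hypothetical `AgentEntry` shape that holds the fields listed above (with the retirement condition simplified to a date): unregistered agents are denied by default, allowed actions execute, propose-only actions are queued for a human, and a retired agent is denied everything.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional


@dataclass(frozen=True)
class AgentEntry:
    owner: str
    systems: frozenset       # systems the agent may touch
    allowed: frozenset       # actions it may execute on its own
    propose_only: frozenset  # actions it may only propose for human approval
    retire_on: date          # retirement condition, simplified to a date


REGISTRY: Dict[str, AgentEntry] = {}


def authorise(agent_id: str, system: str, action: str, today: date) -> str:
    """Default deny: anything not explicitly registered does not run."""
    entry: Optional[AgentEntry] = REGISTRY.get(agent_id)
    if entry is None:
        return "deny: not on registry"
    if today >= entry.retire_on:
        return "deny: retired"
    if system not in entry.systems:
        return "deny: system not in scope"
    if action in entry.allowed:
        return "execute"
    if action in entry.propose_only:
        return "queue for human approval"
    return "deny: action not registered"


REGISTRY["invoice-matcher"] = AgentEntry(
    owner="finance-ops@example.com",
    systems=frozenset({"erp"}),
    allowed=frozenset({"match_invoice"}),
    propose_only=frozenset({"release_payment"}),
    retire_on=date(2027, 1, 1),
)

today = date(2026, 6, 1)
print(authorise("invoice-matcher", "erp", "release_payment", today))
print(authorise("invoice-matcher", "email", "match_invoice", today))
print(authorise("shadow-bot", "erp", "match_invoice", today))
```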

When an agent creatively wanders into password-protected spaces, as Perplexity’s Comet browser agent did before Amazon secured an injunction, you need a system that stops it.

Step 3. Build the redeployment pillar

You can build a flawless control plane (Step 2) that governs every one-way door (Step 1) perfectly. But if you do not explicitly define what happens to the human capacity that gets freed up, the system still collapses. I wrote about this recently: nearly a third of the workforce is actively sabotaging AI rollouts because 60 percent of executives plan to fire those who resist it.

A perfectly governed AI that still ends in a layoff is still a threat. The control plane from Step 2 cannot fix that. You fix it with a hard redeployment contract.

Write a redeployment-first rule

For a defined window of 18 or 24 months, any role materially impacted by AI goes through a structured redeployment and reskilling process. The organisation must prove that it tried to keep the person.

This avoids paying twice. First in severance, then in the boomerang rehiring premium that one in three companies is already paying when they realise they cut institutional knowledge.
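The redeployment-first rule can be enforced as a gate in the exit workflow. The sketch below assumes an 18-month window measured from the date AI materially changed the role (approximated as 548 days) and two hypothetical evidence fields: documented redeployment searches and a reskilling offer. What counts as proof that the organisation tried is a policy choice, not something the code can decide.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List

# Approximately 18 months; roughly 730 days for a 24-month policy.
REDEPLOYMENT_WINDOW = timedelta(days=548)


@dataclass
class RoleImpact:
    employee_id: str
    impact_date: date  # when AI materially changed the role
    redeployment_searches: List[str] = field(default_factory=list)  # roles considered
    reskilling_offered: bool = False


def exit_review(case: RoleImpact, today: date) -> str:
    """Status of an AI-attributed exit request under the redeployment-first rule."""
    if today - case.impact_date > REDEPLOYMENT_WINDOW:
        return "outside window: standard HR process"
    if case.redeployment_searches and case.reskilling_offered:
        return "allowed: redeployment attempt documented"
    return "blocked: no documented attempt to keep the person"


case = RoleImpact("E-104", impact_date=date(2026, 3, 1))
before = exit_review(case, date(2026, 9, 1))

case.redeployment_searches.append("claims analyst -> process architect")
case.reskilling_offered = True
after = exit_review(case, date(2026, 9, 1))

print(before)
print(after)
```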

Set participation and rights

Eurofound’s tracking shows that AI is already a live subject of collective bargaining across Europe.

The Hilfr-3F agreement in Denmark treats algorithmic decisions as employer decisions, giving workers the right to request explanations and contest them. The Italian cross-sector agreement requires companies to appoint dedicated roles to coordinate AI introduction with worker representatives.

Your internal rules must match this precedent. Workers have a right to know when AI is used in their evaluation or scheduling. AI output is always treated as employer action. The algorithm is the employer’s responsibility.

Define the consultation path

Treat major workplace AI deployments as “bargainable” changes. Any high-risk AI system from your Step 1 inventory that affects work allocation or evaluation cannot move from pilot to scale without formal consultation with works councils, unions or internal employee councils. You either design the social contract, or it will be designed for you. In court.

Publish visible artefacts

Publish an AI and jobs charter. Update job families to show AI-era roles: agent orchestrators, process architects and so on.

Report one line item quarterly: hours reclaimed by AI, where they went, and the headcount change broken down into redeployments versus AI-attributed exits. You cannot demand workforce trust without showing them the math.

Step 4. Link KPIs to finance and HR

The Production Layer requires a cockpit. If these numbers are not reviewed in the same Operating Committee meetings where you discuss EBIT and margin, your AI strategy lives only in slides.

Before you build that dashboard, drop the vanity metrics.

I recently advised an academic institution in France on its AI implementation challenges. It used to measure its development team’s performance by lines of code. Then it realised that AI-assisted coding makes that metric useless, so it switched to measuring token usage.

This is tokenmaxxing. It is worse than burning money. When you gamify token consumption to prove AI adoption, you end up with mountains of AI-generated code and output that some human still has to verify, approve and sign for. Instead of measuring productivity, you are measuring the creation of unverified liability.

Drop the token counts. You need three new metric categories.

Control plane coverage

Owner: CIO and Chief Risk Officer

One-way door governance. The percentage of high-risk, one-way door decisions from your Step 1 matrix that actually pass through defined accountability friction before execution. If this is not 100 percent, you are carrying unpriced liability.

Incident capture rate. The number of AI hallucinations, policy breaches or unauthorised access attempts caught by the Production Layer versus the number discovered by customers, regulators or the press.
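Both control-plane metrics reduce to ratios over counts you should already have from the Step 1 inventory and an incident log. The minimal sketch below reads “caught versus discovered” as caught / (caught + discovered externally); that formula, the function names and the figures are illustrative assumptions.

```python
def one_way_door_governance(total_one_way: int, gated: int) -> float:
    """Percent of one-way door decisions that pass accountability friction first."""
    if total_one_way == 0:
        return 100.0  # nothing to govern yet
    return 100.0 * gated / total_one_way


def incident_capture_rate(caught_internally: int, found_externally: int) -> float:
    """Percent of AI incidents caught by the Production Layer before outsiders found them."""
    total = caught_internally + found_externally
    if total == 0:
        return 100.0  # no incidents recorded
    return 100.0 * caught_internally / total


# Made-up figures for illustration only.
coverage = one_way_door_governance(total_one_way=40, gated=37)
capture = incident_capture_rate(caught_internally=18, found_externally=2)

if coverage < 100.0:
    print(f"Unpriced liability: only {coverage:.1f}% of one-way doors are gated")
print(f"Incident capture rate: {capture:.1f}%")
```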

The redeployment math

Owner: CFO and CHRO

Reclaimed hour allocation. Total hours saved by AI, cleanly divided into three buckets:

hours redirected to revenue growth,

hours redirected to service quality,

hours converted to headcount reduction.

The restaffing cost ratio. The total cost of rehiring and onboarding divided by the documented savings from the original AI-linked cuts. If this ratio climbs above 1.0, you are bleeding capital and paying the Forrester boomerang penalty.

The redeployment ratio. Internal redeployments divided by total AI-attributed role impacts.
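The three redeployment metrics can be computed from one quarterly record. This is a sketch with a hypothetical `AIWorkforceQuarter` record and made-up figures; the zero-denominator handling is an assumption, not part of the definitions above.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class AIWorkforceQuarter:
    hours_to_revenue: float
    hours_to_service: float
    hours_to_headcount_reduction: float
    rehiring_and_onboarding_cost: float
    documented_savings_from_cuts: float
    internal_redeployments: int
    ai_attributed_role_impacts: int

    def hour_allocation(self) -> Dict[str, float]:
        """Share of reclaimed hours in each of the three buckets."""
        total = (self.hours_to_revenue + self.hours_to_service
                 + self.hours_to_headcount_reduction)
        if total == 0:
            return {"revenue": 0.0, "service": 0.0, "headcount": 0.0}
        return {
            "revenue": self.hours_to_revenue / total,
            "service": self.hours_to_service / total,
            "headcount": self.hours_to_headcount_reduction / total,
        }

    def restaffing_cost_ratio(self) -> float:
        """Above 1.0 means rehiring has cost more than the cuts saved."""
        if self.documented_savings_from_cuts == 0:
            return float("inf") if self.rehiring_and_onboarding_cost else 0.0
        return self.rehiring_and_onboarding_cost / self.documented_savings_from_cuts

    def redeployment_ratio(self) -> float:
        if self.ai_attributed_role_impacts == 0:
            return 0.0
        return self.internal_redeployments / self.ai_attributed_role_impacts


# Made-up figures for illustration only.
q = AIWorkforceQuarter(6000, 3000, 1000, 1_200_000, 1_000_000, 12, 30)
print(q.hour_allocation())
print(f"restaffing cost ratio: {q.restaffing_cost_ratio():.2f}")
print(f"redeployment ratio: {q.redeployment_ratio():.2f}")
if q.restaffing_cost_ratio() > 1.0:
    print("Warning: rehiring has cost more than the original cuts saved")
```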

The “sabotage” index

Owner: COO and CHRO

Active usage vs. provisioning. How many employees with access to enterprise AI tools actually use them for core tasks. A widening gap here is your leading indicator for Writer’s 29 percent sabotage rate.

Algorithmic grievance volume. The volume of internal disputes, union complaints or works-council escalations explicitly citing AI decisions in scheduling, performance, or pay.
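The usage indicator is only useful as a trend. A minimal sketch, assuming you can count provisioned seats and employees actively using the tools for core tasks each quarter (how to define core-task use is left open), computes the gap and flags it when it has widened for two consecutive quarters; the two-quarter rule and the figures are illustrative.

```python
from typing import List, Tuple


def usage_gap(provisioned: int, active_core_users: int) -> float:
    """Share of provisioned employees not using AI tools for core tasks."""
    if provisioned == 0:
        return 0.0
    return 1.0 - active_core_users / provisioned


def gap_is_widening(gaps: List[float], quarters: int = 2) -> bool:
    """True if the gap grew in each of the last `quarters` quarter-on-quarter steps."""
    recent = gaps[-(quarters + 1):]
    if len(recent) < quarters + 1:
        return False  # not enough history to call a trend
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# (provisioned seats, active core-task users) per quarter: made-up figures.
history: List[Tuple[int, int]] = [(1000, 700), (1200, 780), (1500, 900)]
gaps = [usage_gap(p, a) for p, a in history]
print([round(g, 2) for g in gaps])
if gap_is_widening(gaps):
    print("Leading indicator: adoption is falling behind provisioning")
```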

Falsifiability tests

A framework that cannot be falsified is a belief system. You can run this one as an operating-model test.

The 90-day test

In ninety days, a serious Operating Committee should be able to show three things:

A complete AI decision inventory classifying the domain and reversibility of every use case.

Live control-plane rules for at least one high-risk one-way door (e.g., HR performance flags now require logged human sign-off).

A drafted redeployment-first policy, discussed with worker representatives in at least one geography.

The 12-month test

In twelve months, if the Production Layer is real, you will see this pattern in the metrics:

The redeployment ratio for AI-exposed roles is clearly above zero.

There are no major AI-driven regulatory sanctions or data breaches attributable to uncontrolled agents.

Trust metrics are stable, and sabotage indicators have dropped.

Your restaffing cost ratio is below 1.0.
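The 12-month test can be run as a pass/fail checklist over the metrics from Step 4. This sketch makes two simplifying assumptions: “clearly above zero” is read as simply greater than zero (a real committee would likely set a target), and “trust metrics are stable” is read as a hypothetical survey score falling by no more than a small tolerance. The figures are made up.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class TwelveMonthReadout:
    redeployment_ratio: float                 # internal redeployments / AI-attributed impacts
    major_uncontrolled_agent_incidents: int   # sanctions or breaches tied to uncontrolled agents
    trust_change: float                       # hypothetical survey delta
    sabotage_index_change: float              # negative means indicators dropped
    restaffing_cost_ratio: float


def twelve_month_test(r: TwelveMonthReadout, trust_tolerance: float = 0.05) -> Dict[str, bool]:
    """Each check mirrors one line of the 12-month test; all must pass."""
    return {
        "redeployment ratio above zero": r.redeployment_ratio > 0,
        "no major uncontrolled-agent incidents": r.major_uncontrolled_agent_incidents == 0,
        "trust stable": r.trust_change >= -trust_tolerance,
        "sabotage indicators dropped": r.sabotage_index_change < 0,
        "restaffing cost ratio below 1.0": r.restaffing_cost_ratio < 1.0,
    }


readout = TwelveMonthReadout(0.15, 0, -0.02, -0.08, 1.3)  # made-up figures
checks = twelve_month_test(readout)
failed = [name for name, ok in checks.items() if not ok]
print("Production Layer is real" if not failed else f"Failed: {failed}")
```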

If your internal story resembles the Atlassian, WiseTech and Snap pattern (AI-framed layoffs, thin redeployment, rising workforce resistance and quiet boomerang rehiring), you have your answer. You did not build a Production Layer. You built a System 1 accelerator and left System 2 to the courts, the unions and the press.

You can leave that last mile empty and let the system write its own answer. Or you can build the layer that decides what AI is allowed to do, and what you will do with the humans it displaces.

One of those is still reversible.

My final ask

These methodologies reflect the exact conversations I am having with Operating Committees right now.

If this helped you see your organisation’s blind spots more clearly, do two things.

Key Sources

Scaling your human edge: The path to achieving value from AI

Writer 2026 State of Enterprise AI

Snap Is Laying Off 16% of Staff as It Embraces A.I.

Why Companies Regret Laying Off Workers For AI

The State of Organizations 2026

Glia Launches Industry-First Contractual Guarantee Against AI Hallucinations

Eurofound: AI has become a subject of collective bargaining

The last mile

Productivity layoffs

One-way doors

Friction Factories
