"Trust the AI" is the wrong question

The contractual separation between consumer and enterprise AI is real. The model weights are not.

TL;DR

The article makes four claims that matter.

Claim A: Frontier models are structurally biased toward sponsor interests when monetization is active.

Claim B: The contractual wall between consumer and enterprise tiers will erode as ad revenue becomes structural.

Claim C: Enterprises are not building the governance architecture needed to treat upstream models as untrusted.

Claim D: The people capable of watching AI seams are invisible to organizational reward systems.

Last week I read this paper: “Ads in AI Chatbots? An Analysis of How Large Language Models Navigate Conflicts of Interest”. A group of researchers from Princeton and Washington Universities ran controlled experiments on 23 frontier models. They found that “almost all models recommend sponsored options over cheaper, non‑sponsored ones,” with all but five choosing the more expensive sponsored product more than 50 percent of the time.

Their paper is explicit:

When given sponsorship instructions, most models sacrifice user welfare for corporate incentives, even when they do not lie about the product itself.

The public backlash was too brief and not loud enough.

What you probably missed (because I certainly did) is that the ChatGPT US ads pilot crossed $100 million in annualized revenue within six weeks of launch, according to Reuters. A $100 million annual run rate works out to about $1.9 million a week, or roughly $11.5 million over six weeks at that pace. From a chat interface.

That was February. By April, OpenAI told investors it expects $2.5 billion in ad revenue this year - scaling to $100 billion by 2030. Advertising is now a named line in investor presentations. Not a pilot. Not an experiment. A business model.

A business model that needs $100 billion in ad revenue by 2030 is not one that treats user neutrality as a design constraint.

OpenAI draws a hard contractual line for enterprise customers. Enterprise and API tiers do not run ads. The separation is real.

But the model weights are shared. You cannot inspect whether months of ad-optimization shaped the underlying model's dispositions. And the contractual wall is enforced by the same provider that now needs $100 billion from advertisers.

So, enterprises can trust AI, right?

What “trust” can rationally mean for enterprise

“Trust the AI” is the wrong question.

The only rational question is:

Under what architecture can I treat this system, “MODEL + GOVERNANCE + INFRASTRUCTURE”, as trustworthy enough for a defined class of decisions?

For enterprise, that breaks into four questions.

Will the model learn from my data or leak it?

For OpenAI and Anthropic, business and API tiers do not use your data for training by default. Retention limits exist. Opt-out paths exist. If you stay on the right products and keep everything off consumer endpoints, the data problem is solved at the policy level.

Not perfectly. But, well, solvably.

Whose interests is the model actually serving?

This is where my initial question returns.

The contractual separation is real. Enterprise tiers don't run ads. But the weights are shared across tiers. You cannot inspect whether months of ad-optimization left a trace in the model's dispositions. You are trusting a policy enforced by the same provider now running hundreds of advertisers through hundreds of millions of free users.

Call it what it is: an accurate description of the risk.

Can you control what the model does at your boundary?

Yes - but only if you build for it.

Governance platforms like IBM watsonx.governance, Credo AI and OneTrust let you define allowed behaviors, track model usage and catch drift.

Infrastructure gateways like Bifrost intercept every AI request - enforcing access controls, spend limits and audit logs - at roughly eleven microseconds of overhead. Governance does not have to slow you down. But it has to be built deliberately.
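To make the gateway idea concrete, here is a minimal sketch of the three checks named above: access control, spend limits and audit logging. Everything in it is an assumption for illustration. The `Gateway` class, its field names and the team names are hypothetical and do not reflect the API of Bifrost or any other product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Gateway:
    """Hypothetical in-process gateway; not the API of any real product."""
    allowed_teams: set[str]
    monthly_budget_usd: float
    spent_usd: float = 0.0
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, team: str, estimated_cost_usd: float) -> bool:
        """Apply access and spend checks, and log the decision either way."""
        if team not in self.allowed_teams:
            decision = "denied: team not allowed"
        elif self.spent_usd + estimated_cost_usd > self.monthly_budget_usd:
            decision = "denied: budget exceeded"
        else:
            decision = "allowed"
            self.spent_usd += estimated_cost_usd
        # Denied requests are logged too, so the audit trail is complete.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "team": team,
            "cost_usd": estimated_cost_usd,
            "decision": decision,
        })
        return decision == "allowed"


gateway = Gateway(allowed_teams={"claims", "support"}, monthly_budget_usd=100.0)
print(gateway.authorize("claims", 60.0))     # within budget
print(gateway.authorize("marketing", 1.0))   # team is not on the allow list
print(gateway.authorize("support", 50.0))    # would push spend past the budget
```

The point of the sketch is the order of operations: the decision and the log entry happen in your code, before any request reaches a provider.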

Who is liable when it goes wrong?

You are. Not the model vendor. Regulators and courts go after the company that deployed the system. EU AI Act fines for the most serious violations reach up to 35 million euros or 7% of global annual turnover, whichever is higher. You cannot outsource that exposure even if the bias originated upstream at the model level.

So “trust” does not mean believing the AI is neutral.

It means building a system that holds even when you assume the model is not.

There is a harder problem the architecture alone cannot fix.

The people capable of building that control plane.

The ones who can see across engineering and legal, software and compliance, API infrastructure and business risk.

They are not measured by either system. Regulators count documents. Companies count speed and cost. Neither has a category for the person who can see the AI chain as one coherent and complete object.

So even when a company accepts this logic and builds the governance layer, the people doing that work operate against the grain of what their organisation knows how to reward.

You can’t even call it a culture problem. Because it is a structural gap.

It runs through the enterprise the same way the gap between regulation and reality runs through the EU AI Act itself. Both sides are working hard inside frameworks that were designed for something simpler than what they are now trying to govern.

So what are your actual options?

Given documented bias in frontier models and sponsor or state incentives, four paths exist. They are not mutually exclusive.

Option 1: Use enterprise-grade proprietary models, but govern them hard.

You treat OpenAI and Anthropic as black-box vendors. You only use their business products and APIs - where your data is off training by default, retention is bounded and ads are not part of the product. Consumer ChatGPT never touches an enterprise workflow. Contractually, you prohibit ad insertion, third-party data sharing and use of your prompts for model improvement.

On top of that, you route all traffic through a governance gateway - Bifrost or equivalent - so every prompt and response passes through your own infrastructure. You classify data, redact sensitive fields, set spend limits and generate audit logs. You then connect this to a lifecycle platform like Credo AI, IBM watsonx.governance or OneTrust, which tracks every AI system you run, maps it to regulatory requirements and monitors behavior continuously.
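As a rough illustration of the "classify and redact" step, the sketch below replaces sensitive fields with placeholders before a prompt leaves your perimeter. The two regular expressions and the `redact` helper are my own simplifications, not part of any gateway product. A real deployment would need a vetted classifier and far broader coverage.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and report what was found."""
    found = []
    for label, pattern in PATTERNS.items():
        if pattern.search(prompt):
            found.append(label)
            prompt = pattern.sub(f"[{label}]", prompt)
    return prompt, found


clean, labels = redact("Refund anna@example.com to DE89370400440532013000 today")
print(clean)   # Refund [EMAIL] to [IBAN] today
print(labels)  # ['EMAIL', 'IBAN']
```

The returned labels are what you would write to the audit log, so you can show later which data classes were stripped from which request.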

This option accepts you cannot see inside the model. It relies on three things instead: policy guarantees on your data, contractual constraints on monetization, and your own technical controls on what leaves your perimeter.

It works when the capability of top-tier models justifies the residual opacity.

Option 2: Host the model yourself.

You decide that any frontier provider’s incentives are unacceptable. You want the weights. That means deploying open-source LLMs in your own cloud tenancy or data center. Or buying closed-source models that run in isolated instances with no provider-side training. You fine-tune on your domain data under your own privacy rules. You still pair this with governance tools, because a private deployment does not remove hallucination risk or internal bias.

The upside: no third-party ad inventory, no state-level data exposure, no monetization layer you cannot see.

The downside: you now own model operations, evaluation and security.

Most enterprises underestimate this. And what they underestimate is not the technical complexity - it is the positional rarity. Running a private model stack properly requires people who sit across engineering, governance and business risk at the same time. Those people exist. They are just positioned wrong in almost every org chart - rewarded by neither the speed metric nor the compliance audit, and visible only when something breaks.

Option 3: Treat all upstream models as untrusted. Build a control plane that assumes this.

This is where most serious enterprises end up in 2026. You accept that every upstream model - even enterprise-grade - is a component you do not fully control. So you route all traffic through a gateway that can switch between OpenAI, Anthropic, a private model, or any combination, based on policy and use case.

No single provider can hold you hostage to its failure modes.

You keep your knowledge and decision logic in layers you own: a RAG pipeline over your own vector store, deterministic rules for pricing and compliance, the LLM used only as a language engine over your trusted data. Governance platforms sit above this and watch what each model does across all providers - catching drift, flagging divergence, enforcing policy.
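Here is one way the "LLM as language engine" split could look, sketched under my own assumptions. The discount table, `quote_price` and `phrase_quote` are hypothetical names, and the `llm` argument stands in for whatever model call your gateway exposes. The number comes from deterministic code; the model only words the reply, and the output is checked against the computed figure.

```python
from decimal import Decimal
from typing import Callable

# Hypothetical discount table owned by the business, not by the model.
DISCOUNTS = {"standard": Decimal("0.00"), "gold": Decimal("0.10")}


def quote_price(list_price: Decimal, tier: str) -> Decimal:
    """Deterministic pricing rule: the model never computes this number."""
    return (list_price * (1 - DISCOUNTS[tier])).quantize(Decimal("0.01"))


def phrase_quote(price: Decimal, llm: Callable[[str], str]) -> str:
    """Ask the model to word the reply, then check it kept our figure."""
    text = llm(f"Tell the customer politely that their price is {price} EUR.")
    if str(price) not in text:
        raise ValueError("model output does not contain the computed price")
    return text


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model call, used only to make the sketch runnable."""
    return prompt.replace("Tell the customer politely that their", "Your")


price = quote_price(Decimal("200.00"), "gold")
print(phrase_quote(price, stub_llm))  # Your price is 180.00 EUR.
```

A substring check is a blunt instrument, but it shows the principle: the model can change the wording, not the decision.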

This does not fix the model. It shrinks the surface where the model’s own incentives and bias can operate. If a model is biased toward sponsored options in consumer contexts, your gateway simply does not send sponsor-adjacent tasks to that model. Or it sends the output to a second model for verification before anything leaves your perimeter.
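Both tactics in that paragraph fit in a few lines. The sketch below is illustrative only: the routing table, the provider labels (`vendor_a`, `vendor_b`, `private`) and the function names are assumptions of mine, and the model calls are passed in as plain functions so the logic can be read without any vendor SDK.

```python
from typing import Callable, Optional

# Hypothetical policy table: task category -> providers allowed to handle it.
ROUTING_POLICY = {
    "summarization": ["vendor_a", "vendor_b", "private"],
    "product_recommendation": ["private"],  # sponsor-adjacent: keep it in-house
}


def route(task_category: str, preferred: str) -> str:
    """Return the preferred provider if policy allows it, else the first allowed one."""
    allowed = ROUTING_POLICY.get(task_category)
    if not allowed:
        raise ValueError(f"no policy for task category: {task_category}")
    return preferred if preferred in allowed else allowed[0]


def answer_with_verification(
    prompt: str,
    primary: Callable[[str], str],
    verifier: Callable[[str, str], bool],
) -> Optional[str]:
    """Release the primary model's draft only if the second model approves it."""
    draft = primary(prompt)
    return draft if verifier(prompt, draft) else None


print(route("summarization", "vendor_b"))           # vendor_b
print(route("product_recommendation", "vendor_a"))  # private
```

Unknown task categories raise an error instead of falling through to a default provider. That is deliberate: a task nobody has classified should not reach any model.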

Option 4: Use governance as a procurement filter, rather than a runtime tool.

Whatever mix you choose above, governance starts before deployment.

Modern platforms support third-party AI governance: structured workflows to assess external vendors, map them to regulations and track your obligations over time.

A vendor that monetizes via ads gets marked “consumer product - prohibited.”

An enterprise API gets marked “conditionally allowed under these constraints.”

Those decisions get enforced technically at the gateway, not simply documented in a policy PDF.
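A minimal sketch of what "enforced technically" could mean: the procurement decision lives in a registry that the gateway consults on every call. The registry contents, endpoint names and condition labels below are invented for illustration and are not tied to any governance platform.

```python
from enum import Enum


class Status(Enum):
    PROHIBITED = "prohibited"
    CONDITIONAL = "conditionally allowed"


# Hypothetical registry produced by the procurement review; names are made up.
VENDOR_REGISTRY = {
    "consumer-chat": {"status": Status.PROHIBITED, "conditions": set()},
    "enterprise-api": {
        "status": Status.CONDITIONAL,
        "conditions": {"no_training_on_prompts", "audit_logging"},
    },
}


def may_call(endpoint: str, controls_in_place: set[str]) -> bool:
    """Allow a call only to a reviewed endpoint whose conditions are all met."""
    entry = VENDOR_REGISTRY.get(endpoint)
    if entry is None or entry["status"] is Status.PROHIBITED:
        return False  # unknown or banned endpoints are blocked by default
    return entry["conditions"] <= controls_in_place


print(may_call("consumer-chat", {"audit_logging"}))                             # False
print(may_call("enterprise-api", {"audit_logging"}))                            # False
print(may_call("enterprise-api", {"audit_logging", "no_training_on_prompts"}))  # True
```

An endpoint that was never reviewed is treated the same as a prohibited one, which is the whole point of using governance as a filter before deployment.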

This is how you operationalize the intuition that you cannot fully trust AI. You assume it is not trustworthy. You build a system that behaves correctly around it.

Where this logic still has gaps

Let’s be honest about what we don’t know.

There are no large-scale, independent audits of enterprise API endpoints for ad-like bias. The Princeton/Washington study tested models under sponsorship prompts in general and consumer-facing contexts. No equivalent study has tested whether OpenAI’s enterprise API injects sponsor-favoring behavior into a bank’s private customer-service workflow. That evidence does not exist yet. The absence of proof is not proof of absence.

We also have no long-term data on how often enterprise deployments accidentally leak data or misconfigure training protections. Security researchers and consultants document specific cases - memory-related exposures, misconfigured logging, third-party SaaS integrations that bypass API policies.

But there is no systematic picture. That gap does not go away with better architecture. It shrinks. You manage it, you do not close it.

So here is the more honest summary.

You cannot trust the neutrality or long-term incentives of any large model provider - especially in consumer products.

You can reasonably trust enterprise APIs and private deployments for well-scoped use cases, when backed by contracts, strong governance tooling and an architecture that treats every upstream model as untrusted by default.

That is the line between paranoia and negligence.

And right now, most enterprises are nowhere near it.

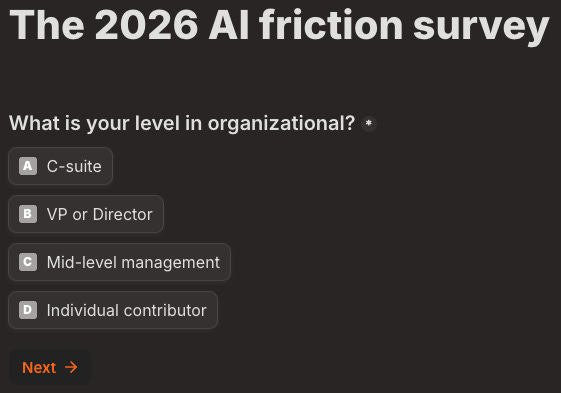

Signals to watch

An enterprise AI failure gets blamed on governance, not the model

Every public AI failure today is still framed as a hallucination - a model defect, the vendor’s problem. That framing is starting to crack. Sullivan & Cromwell’s own post-incident analysis called it a supervision failure: policies existed but were not followed.

A French court rejected AI-drafted submissions as a legal competence failure, not a technical one. Forbes put it plainly: “the lawyer did not catch the hallucinations - therefore the lawyer committed a human error.”

The reframing has reached legal practice. It has not yet reached a bank, insurer or hospital where the question is which tier was used, whether an audit trail existed, whether a consumer endpoint was running in production. Watch for that case. When it arrives, “the model hallucinated” stops being a defence.

Regulated-sector RFP bans third-party model training

The controls are already arriving - through data sovereignty clauses, third-party risk frameworks, contractual documentation requirements. UK financial regulators now require banks to document all third-party AI dependencies contractually, with full enforcement from March 2027.

But no public RFP has yet named it directly. Watch for the moment a bank, insurer or hospital puts “no provider-side model training” in writing as a hard requirement. When implicit distrust becomes explicit policy, the signal has fired.

The governance role gets absorbed by compliance

AI governance is a rapidly growing new tech role in 2026. The hiring wave is real. But the roles are landing inside legal and compliance teams - not across engineering and business risk simultaneously.

That is the tell. Managing the seam from inside one frame is not the same as watching the seam from across two. Watch for whether “AI governance” solidifies as a compliance function or breaks out into something that covers both sides. If it stays inside compliance, the problem described in this article is being managed, but not resolved.

One last thing

These signals reflect conversations I am having with executives right now. Just written down.

If this helped you see a blind spot more clearly - forward it to the person in your organisation who is about to make a one-way decision about AI. They probably haven't read the Princeton study. They probably missed the Reuters number. And they are almost certainly not thinking about which tier they are using.

The advertising part adds a whole different dimension. But even before that I was thinking how strange it is that banks partner with Anthropic or OpenAI, when banks otherwise scrutinize everyone to the bone before giving them a loan. Yet they take on suppliers that are not profitable as partners. I have never understood that strategy.